EngageEdu

Online learning has become more prevalent in secondary education in recent years. Current online education platforms have begun to leverage AI tools to better facilitate student learning. Despite these tools, online learning still brings a myriad of challenges that AI technology can help address. My team identified student disengagement as a frequently cited concern with online education. We wanted to address this issue and came up with an AI solution we named EngageEdu.

The goal of this project was to address the problem of student disengagement in online learning by designing a novel AI system. We aimed to leverage machine learning to identify student disengagement, measure student engagement, and provide teachers and secondary students with recommendations that help raise students’ engagement.

Description

UX/UI Designer

Researcher

Role

Alesandra Baca

Yesenia Garcia

Marcus Hanania

Team

We started by conducting background research on student disengagement with online learning, how to define engagement, and how to measure student engagement. We identified several important points:

Studies have found that engagement in online learning is low, which directly impacts performance and students’ readiness to advance to the next grade.

Teachers struggle to assess engagement during online learning and to combat disengagement.

Student engagement can be defined as the degree of interest, attention, curiosity, motivation, progress, optimism and passion shown by students while learning or being taught.

Key indicators of engagement include gaze, facial expressions, frequency and duration of interaction, previous scores, attendance, and behaviors.

Background Research

We looked at frequently used online education tools that have implemented AI features and found that none of them targeted student engagement. Each of the existing AI features within online education tools either dealt with content creation or personalizing online learning. We identified the following competitors and what they offer:

Khan Academy

Provides AI tutoring and AI lesson planning.

Unacademy

Features an AI generated content creator.

Quizlet

Utilizes AI tutors, AI enhanced question solutions, and AI generated flashcards from students’ notes.

Competitive Analysis

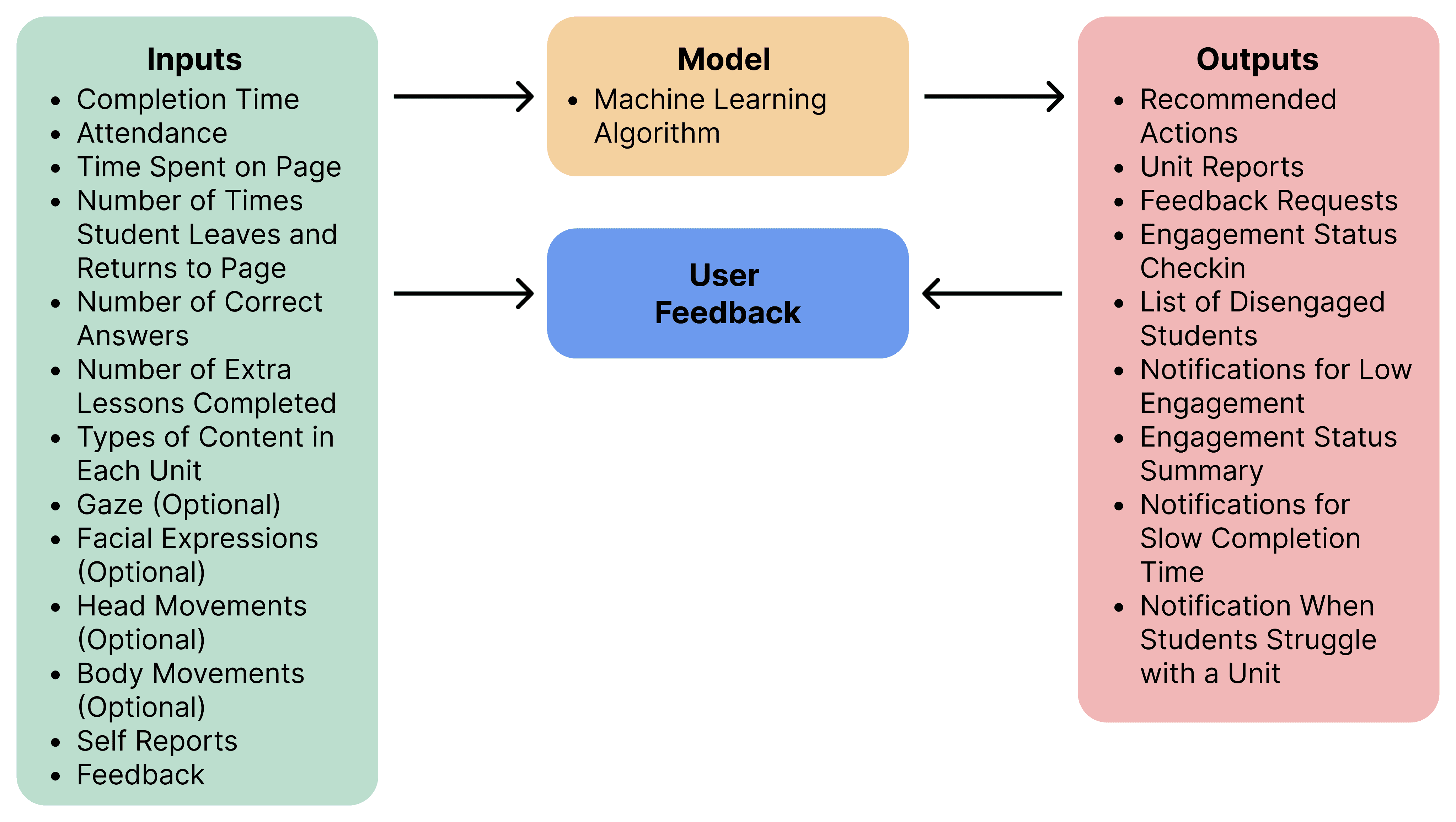

After conducting background research and examining AI tools currently used with online learning platforms, we developed a system schema for our AI application. The schema illustrates the inputs our machine learning algorithm takes in, how the algorithm processes them, and the outputs it generates.
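As a rough illustration of the schema's input-to-output shape, the sketch below models the inputs and outputs as plain data types. The field names are hypothetical and only loosely based on the engagement indicators from our background research. The fixed weights and the 0.5 threshold are placeholders standing in for a trained model; they are assumptions for illustration, not part of our design.

```python
from dataclasses import dataclass, field


@dataclass
class EngagementSignals:
    """Hypothetical per-student inputs, each normalized to the range 0..1."""
    attention: float        # e.g. derived from gaze and facial expressions
    interaction: float      # frequency and duration of interaction
    previous_scores: float
    attendance: float


@dataclass
class EngagementOutput:
    score: float            # 0..1, higher means more engaged
    disengaged: bool
    reasons: list[str] = field(default_factory=list)


# Placeholder weights (assumption); a real system would learn these from data.
WEIGHTS = {
    "attention": 0.4,
    "interaction": 0.3,
    "previous_scores": 0.15,
    "attendance": 0.15,
}


def score_engagement(signals: EngagementSignals, threshold: float = 0.5) -> EngagementOutput:
    """Combine the signals into one score and record which signals were low."""
    values = vars(signals)
    score = sum(WEIGHTS[name] * values[name] for name in WEIGHTS)
    reasons = [
        f"low {name.replace('_', ' ')}"
        for name in WEIGHTS
        if values[name] < threshold
    ]
    return EngagementOutput(score=round(score, 2), disengaged=score < threshold, reasons=reasons)
```

Returning the reasons alongside the score mirrors the idea that the system should be able to explain why a student was marked as disengaged.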

Schema

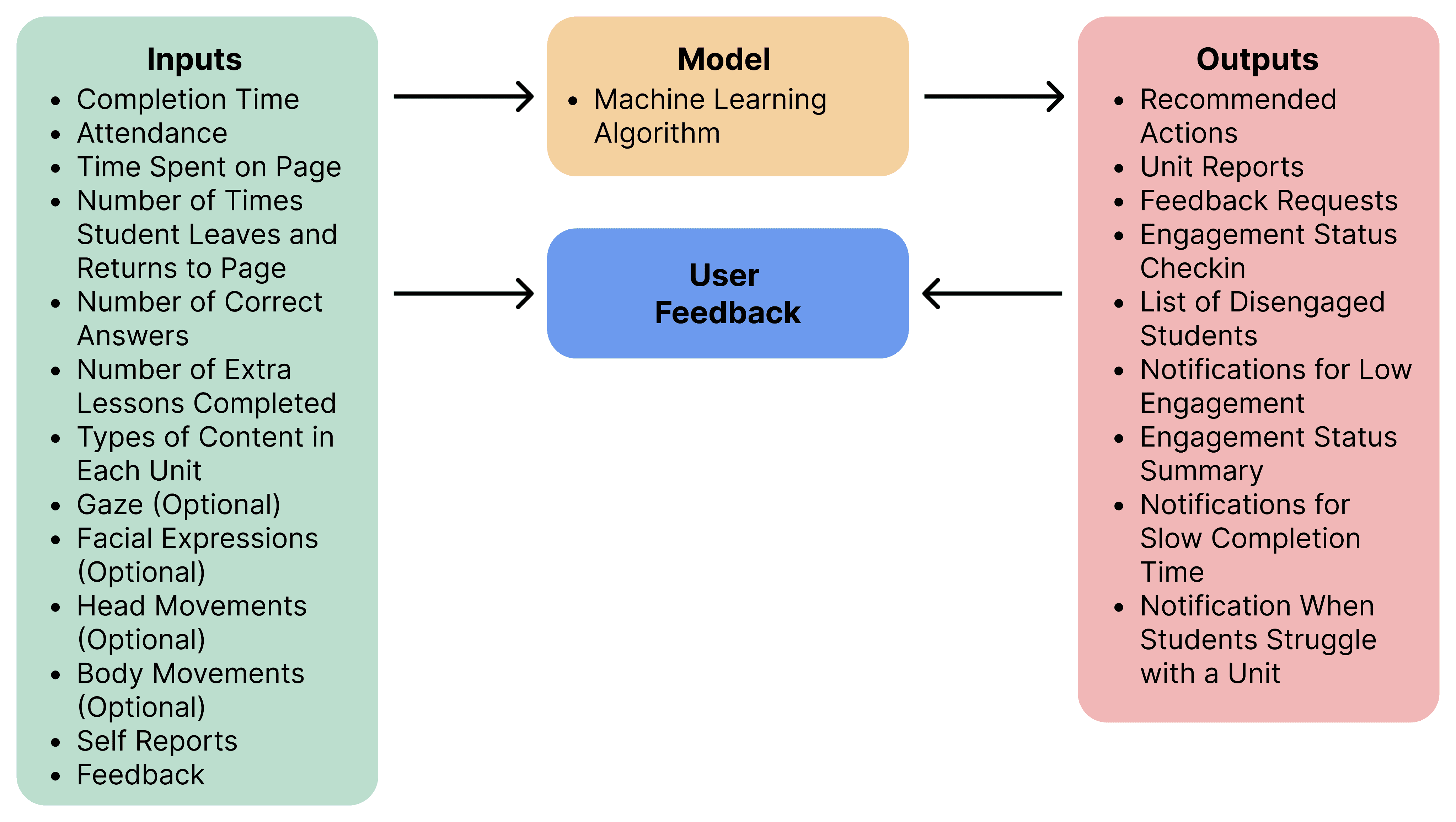

We created several storyboards illustrating scenarios of users’ experience using EngageEdu.

Our first storyboard shows a student who has become disengaged with online learning. The AI detects that they are disengaged and asks the student whether they are struggling and feeling disengaged. The student confirms they are having trouble and are disengaged. The AI then provides suggestions that help the student re-engage and understand the concept.

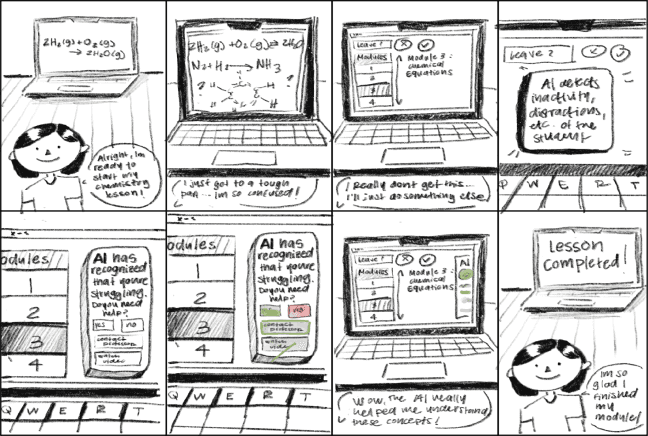

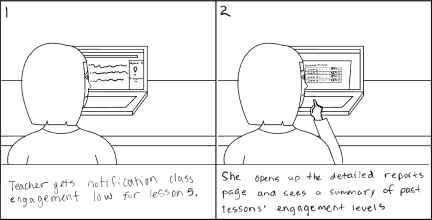

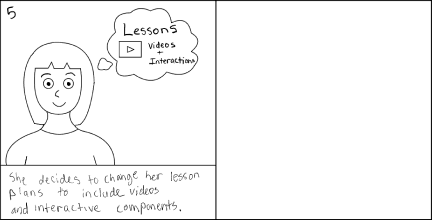

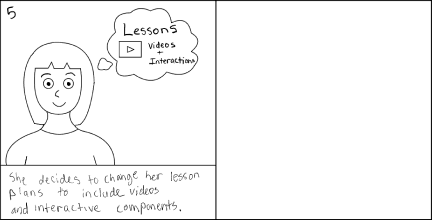

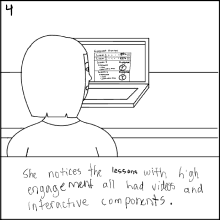

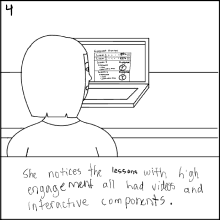

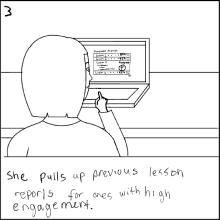

In the second storyboard, a teacher receives a notification that class engagement with lesson 5 is low. She opens the detailed reports generated by EngageEdu and sees that lessons with videos and interactive components have higher engagement, while lessons like lesson 5 that lack them tend to have lower engagement. She uses this information to reshape her lesson plans in a way that fosters higher engagement.

Our third storyboard shows a teacher receiving a notification from EngageEdu that a specific student is disengaged and may need help. The AI explains its reasoning for marking the student as disengaged and asks the teacher to judge, based on those reasons, whether the student is in fact disengaged. The teacher agrees and accepts the AI’s recommendation to reach out to the student to check in. This leads to the student getting back on track.

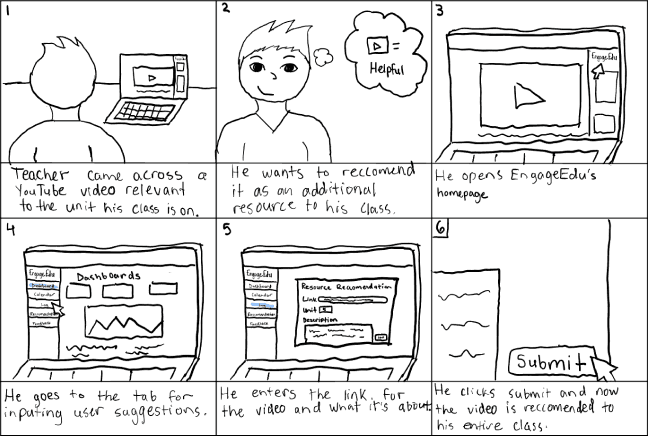

The fourth scenario shows a teacher who has come across a video they believe is relevant to the unit their students are currently on. They want to recommend it to their entire class, so they open EngageEdu’s web-page mode and navigate to the tab for inputting recommendations. They paste the link, type a description of the video, and submit the recommendation. The video now appears as a recommendation for their entire class.

Storyboards

The next step in the design process was to determine guidelines for designing our human-AI interaction. Our team followed Microsoft’s HAX guidelines when designing our AI’s user experience and decided to prioritize the following guidelines:

Time services based on context

Show contextually relevant information

Support efficient invocation

Make clear why the system did what it did

Encourage granular feedback

Design Guidelines

The solution we developed is an extension that leverages machine learning to monitor student engagement and provide recommendations to students and teachers in order to improve students’ online learning. We developed separate versions for teachers and students to account for the different needs of these two user groups. EngageEdu includes two modes: a side panel mode that can be displayed while using current online learning platforms and a web-page mode that contains more capabilities and details.

The side panel mode includes the following tabs:

Engagement Summary

Allows teachers to see their class’s engagement scores and students to see their personal engagement scores, along with the reasoning behind them, for the past three units, with a couple of recommendations underneath.

Engagement Status

Prompts teachers and students the AI has identified as disengaged to confirm or deny the AI-detected low engagement score, or reports that current performance is within range.

Allows students to log their engagement and shows teachers currently disengaged students and other identified issues.

The web-page mode includes the following tabs:

Dashboard

Provides teachers with a class report and students with a personal report, each with statistics on performance, engagement, time spent, and progress.

Calendar

Allows teachers to see assignment due dates for a specific class and students to see all their assignment due dates.

Engagement Center

Enables teachers and students to view a list of denied, confirmed, and unanswered AI recommendations and self-reported engagement logs.

Gives students the option to report their engagement with a course, unit, and lesson.

Recommendations

Provides recommendations to further enhance the online learning process grouped by and based on performance, engagement, and other identified concerns.

Allows users to submit their own recommendations for a class, unit, and lesson along with any associated files or links.

Feedback

Allows users to provide feedback on EngageEdu’s helpfulness, impact, performance, and any other issues.

Information Page

Informs users what EngageEdu is, how it can help them, and how the AI measures engagement.

Provides students with information regarding privacy, student data usage, and what their teachers can see.

High Fidelity Prototype

While developing our design and prototype we uncovered several major design challenges that needed to be addressed and came up with the following solutions:

The system may mark a student as disengaged when in actuality they are taking a break.

Solution: The AI notifies students when it detects they are disengaged and allows them to confirm if this is correct or if it’s something else.

Students may have privacy concerns regarding what teachers can see.

Solution: We designed an information page that is transparent about everything EngageEdu tracks and what teachers can see.

Students and teachers may not trust an AI to make decisions and will want to know what its decisions are based on.

Solution: We included a section on the information page that details how our AI measures engagement.

Identified Challenges

We made sure our design reflected the guidelines we found previously to be most important:

Time services based on context

EngageEdu sends push alerts only if the AI detects a student is inactive, disengaged, or frequently switching tabs and otherwise doesn’t forcefully interrupt the user.

Show contextually relevant information

EngageEdu bases its recommendations on the users’ context regarding online learning such as past and current performance, engagement, and behavior.

Support efficient invocation

We made it easy to invoke EngageEdu as needed: users simply open their extension list and select EngageEdu.

Make clear why the system did what it did

The AI explains why it recommends certain actions and resources by listing the factors that impacted the engagement score and categorizing recommendations by performance, engagement, and other identified concerns.

Encourage granular feedback

EngageEdu asks for users’ feedback in multiple ways: it prompts the user to agree with or deny AI-identified concerns and recommendations, and it offers a dedicated feedback tab that is easily accessible in both side panel and web-page mode.
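To make the "Time services based on context" behavior more concrete, here is a minimal sketch of how the alert rule could be expressed: a push alert is sent only when a student appears inactive, disengaged, or is frequently switching tabs. The `SessionState` fields and all three thresholds are hypothetical values chosen for illustration; the prototype does not define them.

```python
from dataclasses import dataclass


@dataclass
class SessionState:
    """Hypothetical snapshot of a student's current session."""
    seconds_since_last_input: float
    engagement_score: float        # 0..1, higher means more engaged
    tab_switches_last_5_min: int


# Illustrative thresholds (assumptions, not values from the prototype).
INACTIVE_AFTER_SECONDS = 300
DISENGAGED_BELOW = 0.5
MAX_TAB_SWITCHES = 10


def alert_reasons(state: SessionState) -> list[str]:
    """Return every condition that would justify interrupting the student."""
    reasons = []
    if state.seconds_since_last_input >= INACTIVE_AFTER_SECONDS:
        reasons.append("inactive")
    if state.engagement_score < DISENGAGED_BELOW:
        reasons.append("disengaged")
    if state.tab_switches_last_5_min > MAX_TAB_SWITCHES:
        reasons.append("frequently switching tabs")
    return reasons


def should_send_alert(state: SessionState) -> bool:
    """Send a push alert only when at least one condition applies."""
    return bool(alert_reasons(state))
```

Keeping the reasons separate from the yes/no decision means the same check can also feed the explanation shown to the user.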

Meeting HAX Guidelines

The next phase of the project would be to conduct usability testing on the prototype and implement the feedback. After that would come 2-4 more rounds of testing, depending on the feedback we receive. We would then partner with the engineering team to develop the necessary code for the UI/UX elements, functions, and machine learning models. We would need to develop two types of machine learning models: a supervised learning model that can generate engagement scores and an unsupervised learning model that can generate recommendations based on the scores and detected patterns. Once completed, we would transition into more rounds of user testing and revisions. We would then release EngageEdu after sufficient testing and revisions.
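The split between the two model types can be sketched in a few lines. This is only an illustration under stated assumptions: we have not chosen algorithms, so k-nearest neighbors stands in for the supervised scoring model and a small k-means routine stands in for the unsupervised pattern-finding step. The function names and the idea of mapping each resulting group to a set of recommendations are hypothetical.

```python
from math import dist
from statistics import mean

Vector = tuple[float, ...]


def knn_engagement_score(labeled: list[tuple[Vector, float]], features: Vector, k: int = 3) -> float:
    """Supervised step: average the known scores of the k most similar labeled examples."""
    nearest = sorted(labeled, key=lambda example: dist(example[0], features))[:k]
    return mean(score for _, score in nearest)


def kmeans(points: list[Vector], k: int = 2, iterations: int = 10) -> list[int]:
    """Unsupervised step: group similar points and return a cluster index per point."""
    centroids = list(points[:k])  # deterministic start: the first k points
    assignment = [0] * len(points)
    for _ in range(iterations):
        # Assign each point to its closest centroid.
        assignment = [
            min(range(k), key=lambda c: dist(p, centroids[c]))
            for p in points
        ]
        # Move each centroid to the mean of its members (skip empty clusters).
        for c in range(k):
            members = [p for p, a in zip(points, assignment) if a == c]
            if members:
                centroids[c] = tuple(mean(axis) for axis in zip(*members))
    return assignment
```

In this sketch, the supervised step needs examples that already carry an engagement score, while the clustering step needs no labels at all. Which recommendations belong to each group would still have to be decided and validated with users.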

Next Steps

The timeline for coming up with a concept and developing a prototype was only three weeks, which didn’t leave us enough time for user testing prior to developing a high-fidelity prototype. Had we had more time, we would have developed a low-fi or mid-fi prototype and conducted user testing first. Another change I would have liked to explore at the beginning is further differentiating the teachers’ version from the students’ version. EngageEdu is designed to have mostly the same functions in both versions, but it is common for online learning platforms to have noticeable visual variations between student and teacher versions. I would have liked us to include this as part of our competitive analysis.

Reflections

This doesn’t have to be goodbye.

Send me a message at leahwilliams2019@gmail.com and let’s start a conversation.
